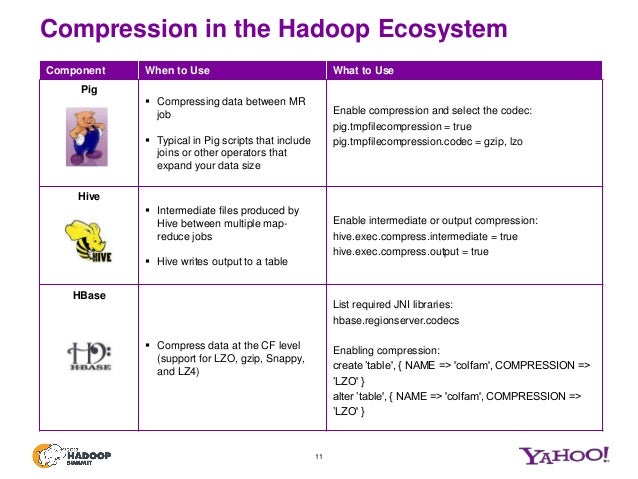

Snappy is a compression/decompression library. It does not aim for maximum compression, or compatibility with any other compression library; instead, it aims for very high speeds and reasonable compression. Source: (Apache 2.0 license) SNAPPY files are a compressed file format developed by Google; a SNAPPY file is created by the SNAPPY program, which is a file compression and …

We are using the Parquet file format with Snappy compression for ingestion. We need to 1) segregate error/reject records and put them into a separate error file or … I did a little test and it seems that both Parquet and ORC offer similar compression ratios. However, there is an opinion that ORC is more compression efficient.

Spark by default supports the ORC file format without importing third-party ORC dependencies. The compression options listed are SNAPPY, ZLIB, LZO and NONE. Create a DataFrame: since we don't have an ORC file to read, we will first create an ORC file from the DataFrame. Below is a sample DataFrame we use to create an ORC file.

I tried `SnappyCodec`, which is a Java library for compression and decompression. I am usually under the impression that if the file format is the same, then each library should have similar logic for compression and decompression. To my surprise, I found out that compressing raw data into `.snappy` using `SnappyCodec` and decompressing it using a Scala library, SnappyFlows, returns a different result. When I tried to decompress using the SnappyFlows flow, which is `SnappyFlows#decompress`: `val decompressed = Source.single(rawData).via(compress).runWith(Sink.fold(ByteString())(_ ++ _))`. It complains that: `The future returned an exception of type: me., with message Invalid header:`. As a consumer, I definitely do not want to tweak the header. My question here is that `SnappyFlows()` uses a framing format and `SnappyCodec` compression is a native Snappy library.
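The `Invalid header` error in the SnappyFlows question above is consistent with a decoder that expects the Snappy framing format receiving bytes that were not written in that format. A framed stream begins with a fixed 10-byte stream identifier chunk, so a quick check on the first bytes can tell the two apart before picking a decompressor. The sketch below is only a diagnostic aid, not how SnappyFlows or `SnappyCodec` work internally; the helper names `looks_like_snappy_framed` and `describe_payload` are hypothetical.

```python
# Stream identifier chunk from the Snappy framing format:
# chunk type 0xff, a 3-byte little-endian length of 6, then the ASCII bytes "sNaPpY".
SNAPPY_STREAM_IDENTIFIER = b"\xff\x06\x00\x00sNaPpY"


def looks_like_snappy_framed(data: bytes) -> bool:
    """Return True if the payload starts with the framing-format stream identifier."""
    return data.startswith(SNAPPY_STREAM_IDENTIFIER)


def describe_payload(data: bytes) -> str:
    """Hypothetical helper: label a payload before choosing a decompressor."""
    if looks_like_snappy_framed(data):
        return "framed"
    return "not framed (raw block or another container)"


if __name__ == "__main__":
    framed_prefix = SNAPPY_STREAM_IDENTIFIER + b"\x00"  # identifier plus one extra byte
    other_bytes = b"\x00\x00\x10\x00example"            # arbitrary bytes without the identifier
    print(describe_payload(framed_prefix))
    print(describe_payload(other_bytes))
```

If the file produced on the Java side is reported as not framed, a framing-format decoder would be expected to reject it, which matches the behaviour described in the question.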
Parquet supports Snappy compression out of the box, which means that you can read Snappy-compressed files on HDFS using Parquet without any additional setup. To read a Snappy-compressed file on HDFS using Parquet, you can use …

Snappy is a file compression format introduced by Google, and I am trying my hand at it using Scala and Java libraries. I initially used the SnappyFlows Scala library for compression and decompression.

Snappy is an extremely fast compressor and decompressor: on a single core of a Core i7 processor in 64-bit mode, it compresses at about 250 MB/sec or more and decompresses at about 500 MB/sec or more. Snappy.NET is a P/Invoke wrapper around native Snappy, which additionally …

Apache Parquet is a columnar storage format that is commonly used in the Hadoop ecosystem. On storage efficiency: with Parquet or Kudu and Snappy compression, the total volume of the data can be reduced by a factor of 10 compared to an uncompressed simple serialization format. In Athena, a table such as `newtable` can be stored in Parquet format with Snappy compression; the default compression for Parquet is GZIP. For information about the compression formats that each file format supports, see Athena compression support.

S2 is an extension of Snappy, a compression library Google first released back in 2011. Snappy originally made the trade-off of going for faster compression and decompression times at the expense of higher compression ratios. Snappy has been popular in the data world, with containers and tools like ORC, Parquet, ClickHouse, BigQuery, Redshift, MariaDB, Cassandra, MongoDB, Lucene and bcolz all offering support. S2 aims to further improve throughput with concurrent compression for larger payloads. S2 is also smart enough to save CPU cycles on content that is unlikely to achieve a strong compression ratio. Encrypted data, random data and data that is already compressed are examples that will often cause compressors to waste CPU cycles with little to show for their efforts. S2 can be a drop-in replacement for Snappy but, for top performance, it shouldn't compress using the backward compatibility mode.
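The point about encrypted, random and already-compressed data is easy to see in a small experiment. The sketch below uses `zlib` from the Python standard library as a stand-in, since Snappy and S2 are not part of the standard library; the absolute numbers will differ from Snappy's, but the contrast between repetitive and random input should look similar. The helper name `compression_ratio` and the sample payloads are illustrative assumptions.

```python
import os
import zlib


def compression_ratio(data: bytes) -> float:
    """Original size divided by compressed size (zlib as a stand-in for Snappy)."""
    compressed = zlib.compress(data)
    return len(data) / len(compressed)


# Highly repetitive text compresses well.
repetitive = b"snappy parquet orc " * 5000

# Random bytes behave like encrypted or already-compressed data.
random_bytes = os.urandom(len(repetitive))

if __name__ == "__main__":
    print(f"repetitive text: {compression_ratio(repetitive):.1f}x")
    print(f"random bytes:    {compression_ratio(random_bytes):.2f}x")
```

A ratio close to 1 on the random payload means the compressor spent CPU time without shrinking the data, which is the case a compressor can try to detect and skip.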
The four major points of measurement are (1) compression time, (2) compression ratio, (3) decompression time and (4) RAM consumption. If you're releasing a large software patch, optimising the compression ratio and decompression time would be more in the users' interest. But if the payload is already encrypted or wrapped in a digital rights management container, compression is unlikely to achieve a strong compression ratio, so decompression time should be the primary goal.
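The four measurements named above can be collected with a small harness. This is a minimal sketch that again assumes `zlib` as a stand-in codec; `CompressionReport` and `measure` are hypothetical names. The memory figure comes from `tracemalloc`, which only sees allocations made through Python's allocator and also slows the timed code, so treat both the memory and timing numbers as rough indications.

```python
import time
import tracemalloc
import zlib
from dataclasses import dataclass


@dataclass
class CompressionReport:
    compress_seconds: float
    ratio: float
    decompress_seconds: float
    peak_bytes: int  # peak Python-level allocation seen by tracemalloc


def measure(data: bytes, level: int = 6) -> CompressionReport:
    """Time a compress/decompress round trip and record ratio and peak memory."""
    tracemalloc.start()
    t0 = time.perf_counter()
    compressed = zlib.compress(data, level)
    t1 = time.perf_counter()
    restored = zlib.decompress(compressed)
    t2 = time.perf_counter()
    _, peak = tracemalloc.get_traced_memory()
    tracemalloc.stop()

    if restored != data:
        raise ValueError("round trip did not reproduce the input")

    return CompressionReport(
        compress_seconds=t1 - t0,
        ratio=len(data) / len(compressed),
        decompress_seconds=t2 - t1,
        peak_bytes=peak,
    )


if __name__ == "__main__":
    payload = b"error,reject,record,1234\n" * 20000
    for level in (1, 9):  # fastest versus strongest zlib setting
        print(level, measure(payload, level))
```

Comparing the reports for levels 1 and 9 gives a feel for the trade-off: a higher level usually buys a better ratio at the cost of compression time, while decompression time changes much less.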

Compression algorithms are designed to make trade-offs in order to optimise for certain applications at the expense of others. The Snappy compression format in the Go programming language.
